Constructing Topical Concept Hierarchical Taxonomy of Tourist Attraction

Abstract

- A hierarchical co-clustering module by using non-negative matrix tri-factorization for allocating attractions and things of interest to topic when splitting a coarse topic into fine-grained ones.

- A concept extraction module for extracting concept of every topic that maintain strong discriminative power at different levels of the taxonomy.

理论数学表达

Non-negative Matrix Factorization

The model is to approximate the input attraction-ToI matrix with three factor matrices that assign cluster labels to tourist attractions and Things of Interest (ToI) simultaneously by solving the following optimization problem:

where $X $ is the input attraction-word content matrix, and $U ∈ R^{m×c}{+}$ and $V ∈ R^{n×c}{+}$ are orthogonal nonnegative matrices indicating low-dimensional representations of attractions and things of interest, respectively. The orthogonal and nonnegative conditions of the two matrices $U$ and $V$ enforce the model to provide a hard assignment of cluster label for attractions and things of interest. $H ∈ R^{c×c}_{+}$ provides a condensed view of $X$ .

The optimization problem above is not convex with respect to the three variables $U$ , $H$ and $V$ together. There is no closed-form solution for the problem. Next, we use an alternative scheme to solve the optimization problem.

This is 理论推导 link.

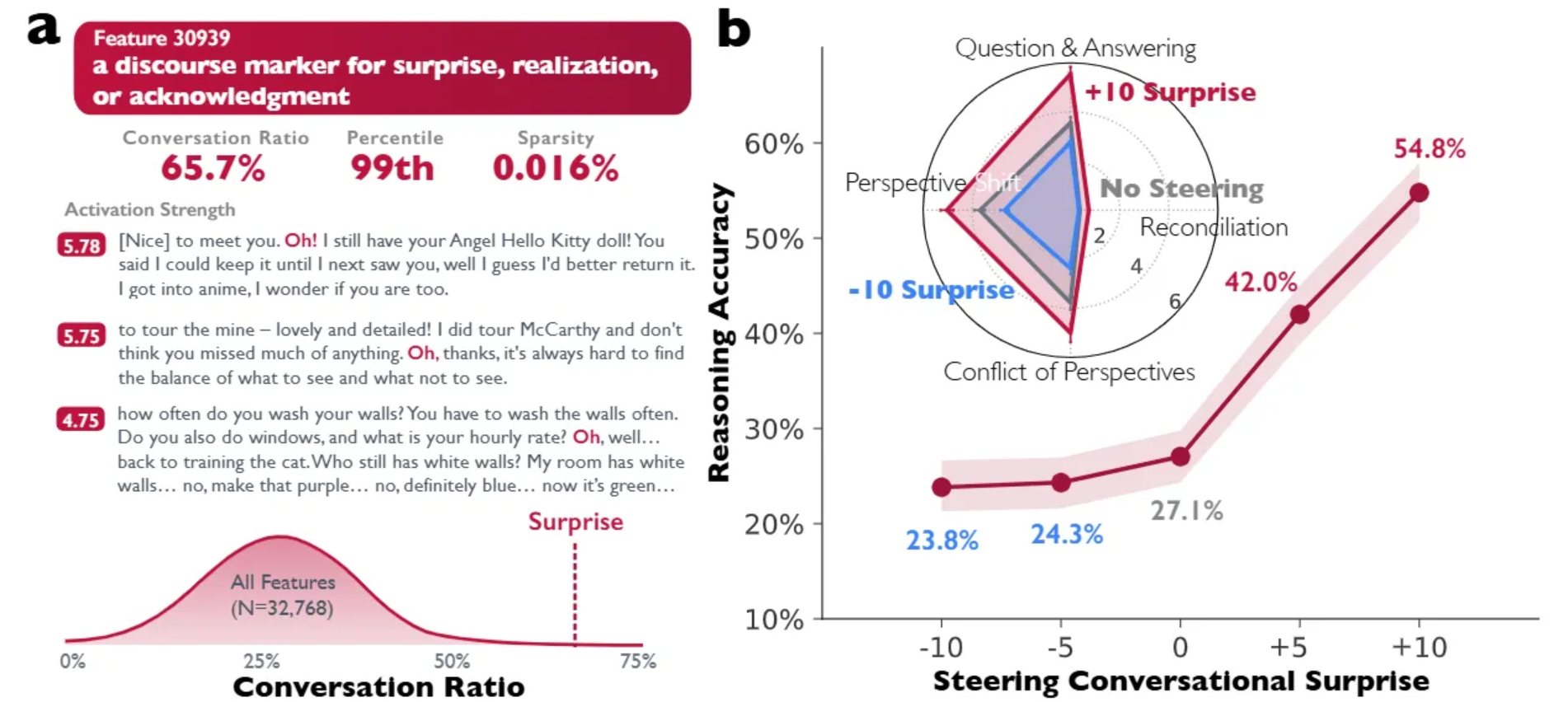

Concept Extraction based on TextRank Algorithm

PageRank

Graph-based ranking algorithms are essentially a way of deciding the importance of a vertex within

a graph, based on global information recursively drawn from the entire graph.

Formally, let $G=(V, E)$ be a directed graph with the set of vertices $V$ and set of edges $E$, where is a subset of $V \times V$ . For a given vertex $\boldsymbol{V}{i}$ , let $\operatorname{In}\left(V{i}\right)$ be the set of vertices that point to it (predecessors), and

let $O u t\left(V{i}\right)$ be the set of vertices that vertex $V{i}$ points to (successors). The score of a vertex $V_{i}$ is defined as

follows (Brin and Page, 1998):

where $d$ is a damping factor that can be set between 0 and 1, which has the role of integrating into the model the probability of jumping from a given vertex to another random vertex in the graph. The factor $d$ is usually set to $0.85$ (Brin and Page, 1998), and this is the value we are also using in our implementation.

TextRank

However, the graphs are built from natural language texts, and may include multiple or partial links between the units (vertices) that are extracted from text. It may be therefore useful to indicate and incorporate into the model the “strength” of the connection between two vertices $\boldsymbol{V}{i}$ and $\boldsymbol{V}{j}$ as a weight $w_{i j}$ added to the corresponding edge that connects the two vertices.

Concept Extraction

在这里,由于我们的模型已经提供了一个关于景点的层级结构,也就相当于对于每一个节点(除根节点以外),其先验知识就是其父节点的知识,也就是我们在抽取关键词作为节点概念的时候,一些在父节点就已经很出众的词语不应该成为子节点的概念。所以关于一个阶段来说,其概念抽取的时候应该选择在父子节点中概念重要程度增加最多的概念:

对树生成的约束

预剪枝

- 定义一个高度,当树达到该高度时就停止树的生长(Max Level)

- 定义一个阈值,当达到某个节点的实例个数小于阈值时就可以停止树的生长

后剪枝

定义一个目标函数,由损失项 (Loss term) 加上正则项 (Regularization term),以最小化结构性风险(Structural risk)

每个景点由一个向量表示,向量由词袋模型生成,所以两个景点向量之间的距离可以表示成:

我们用景点和簇中心向量距离的和来定义这个簇的混乱程度:

因为每一个节点中景点向量的向量空间是变化的,所以直接用决策树的剪枝策略是没有意义的,这里收到决策树代价复杂度剪枝方法的启发,做了一些调整:

- 因为节点之间的景点向量空间是不断变化的,所以在考虑剪枝的时候仅考虑本节点是否应该继续分割而不考虑继续分割后再进行分割而产生的变化,构建如下的损失函数:

$|T|$:该节点生成的簇个数;$N$:样本总数;$N_t$:第 t 个簇中的样本数量;$H_t$:第 t 个簇的混乱程度;$\alpha$:惩罚因子

对于每一个节点,聚类后:

若 $C{\alpha}(t) <= C{\alpha} (T)$ 则此次聚类没有意义,则不需要进行这次聚类。

补充

节点 X 矩阵的构建

TF-IDF 过滤

已知是属于这个节点的景点,我们将这些景点的每一条评论当做是一篇文档,对文档进行分词,然后对每一篇文档内的词计算 tf-idf 值,并且对 tf 值和 idf 值做一个上下界的阈值限制,将所有评论中剩下的词用做词袋模型。

布尔矩阵

我们尝试过直接用 tf-idf 值做 X 矩阵,也尝试了用布尔值做 X 矩阵,也尝试了用词频做 X 矩阵。最终发现 tf-idf 值做矩阵与用词频做矩阵效果都不理想,用布尔值(出现过这个词就为 1,没有出现过就为 0 )做矩阵效果是最理想的。

节点的迭代方法

因为每个景点属于 k 个类别的概率最后和都为 1,也就是矩阵分解出来的结果无法直接识别噪音(噪音型景点),所以我们采取的一个办法首先定义噪音型景点,然后迭代性的删除直到不存在噪音型景点为止。

- 我们认为噪音型景点的两个特征,一个是类别的归属度不高,一个是景点出现的特征词(词袋模型中的词)少。如果一个景点包含这两个特征,就判断其是噪音型的景点。

- 然后节点进行矩阵分解之后对结果进行分析,如果存在噪音型的景点,那么就需要将这些景点从这个节点的景点集合中删除,并且重新构建本节点的 attraction-toi 矩阵,然后重新进行矩阵分解。

- 重复以上的操作直到本节点不存在噪音。

Algorithm

Algorithm 1: Hierarchical Clustering for topic splitting

- Input: The dataset $D$ of topic $C$ ; the number of sub-topics $K$; threshold $N$.

- Output: Hierarchical topics

$Recursion \ function$

$X \leftarrow Prepare\ Matrix (C, D)$

$S_1 , S_2 , … , S_K \leftarrow Clustering \ for \ Topic \ Splitting(X)$

$loss \leftarrow Post \ Pruning \ Function(X, S_1 , S_2 , … , S_K)$

if $loss > 0$ then return \\停止条件之后剪枝

for $K \ from \ 1 \ to \ K$ do

$n \leftarrow item \ number \ of \ C$

if $n<N$ then continue \\停止条件之预剪枝

$CE_k \leftarrow Concept \ Extraction(C, S_k)$

$Recursion \ Function (S_k)$

Return $CE_1, CE_2,… , CE_k, S_1,S_2,…,S_k$

Algorithm 2: Clustering for topic splitting

- Input: A matrix $X$ of parent topic $C$ ; the number of sub-topics $K$; threshold $δ$ and $\gamma$.

- Output: $K$ sub-topics of $C$

$C_{sub}$ $\leftarrow$ $C$ ;

while True do

$ S1, S_2, … , S_K \leftarrow Nonnegative \ Matrix \ Factorization (S{sub}, K)$

for $k$ from $1$ to $K$ do

for t ∈ S_k do

$r( t, S_k ) ← probability\ of \ term \ t \ for \ S_k ;$

$d( t, S_k ) ← document \ frequency \ of \ term \ t \ for \ S_k ;$

if $r(t,S k ) < δ$ and $d( t, S_k ) < \gamma$ then

$S_k ← S_k − {t};$

$C^{‘}_{sub} \leftarrow S_1 ∪ S_2 ∪ … ∪ S_K;$

if $C^{‘}{sub} = C{sub}$ then

$Break;$

$C^{‘}{sub} \leftarrow C{sub}$

Return $S_1 , S_2 , … , S_K;$

Algorithm 3: Non-negative Matrix Factorization

- Input: ${X,U_0,V_0,T}$

- Output: ${U,V}$

Initialize $U = U_0 ,V = V_0 ,H ≥ 0$

while $Not convergent$ and $t ≤ T$ do

Update

Update

Update

$t=t+1$

end while

Algorithm 4: Concept Extraction based on TextRank Algorithm

- Identify text units that best define the node and its parent node, and add them as vertices in the graph, respectively.

- Identify relations that connect such text units, and use these relations to draw edges between vertices

in the graph. - Iterate the graph-based ranking algorithm until convergence.

- The intersection of the two graph vertices is the set of concept words, and the final score of the word is the score difference between the two graphs.

- Sort concept words based on their final score. Use the values attached to each concept word for ranking/selection decisions.

Constructing Topical Concept Hierarchical Taxonomy of Tourist Attraction